Resolution & Compression

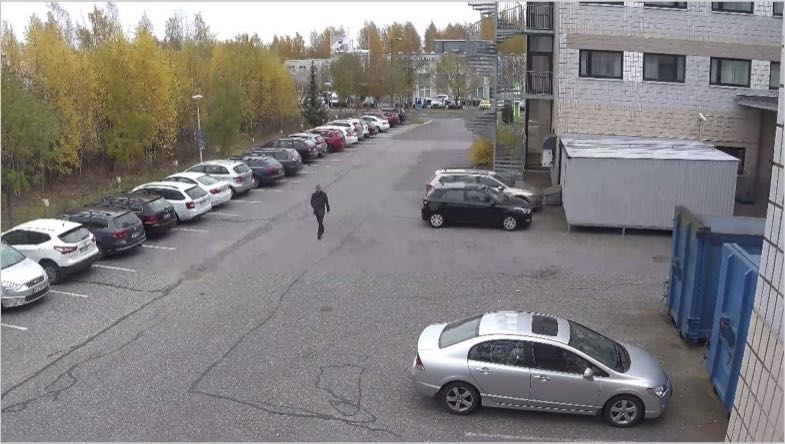

What is the resolution of the two images shown here?

If we define resolution as the frame size of the image, both images were captured at 3840 x 2160 (4K). One was produced by compressing the original image by a factor of 64, the other by a factor of 266.


Video security systems use large numbers of cameras, so bandwidth and storage become the first priority for both the operation and the economics of the system. To keep a system affordable, vendors can only reach lower bandwidth and storage figures through higher compression. Higher compression, however, produces the differences you can see in the next picture.

At first glance the two images look the same, but the difference appears when we zoom in digitally. The image on the right is less compressed than the one on the left, so its details remain visible when it is digitally enlarged. Compression reduces bandwidth and storage, but it also reduces quality.

In video security systems, the footage that is actually played back makes up only 3% of everything recorded. What matters for these systems is whether you can get enough detail when footage is needed after an incident. To answer yes, the essential goal is to capture the best quality at the lowest bandwidth.
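To put the 64x and 266x compression figures quoted above into numbers, here is a minimal sketch. It assumes an uncompressed 3840 x 2160 frame at 24 bits per pixel (the article does not state a bit depth, so this is an assumption), and the function names are purely illustrative.

```python
# Rough per-frame size of a 4K image at two compression ratios.
# Assumption: 3 bytes (24 bits) per pixel before compression.

WIDTH, HEIGHT = 3840, 2160
BYTES_PER_PIXEL = 3  # assumed 8-bit RGB, not stated in the article


def raw_frame_bytes(width: int, height: int,
                    bytes_per_pixel: int = BYTES_PER_PIXEL) -> int:
    """Size of one uncompressed frame in bytes."""
    return width * height * bytes_per_pixel


def compressed_frame_bytes(raw_bytes: int, ratio: float) -> float:
    """Size of the same frame after compression by `ratio` (64 means 64:1)."""
    return raw_bytes / ratio


if __name__ == "__main__":
    raw = raw_frame_bytes(WIDTH, HEIGHT)
    for ratio in (64, 266):
        size_kb = compressed_frame_bytes(raw, ratio) / 1000
        print(f"{ratio}:1 -> about {size_kb:.0f} kB per frame")
```

Under these assumptions the more heavily compressed frame is roughly a quarter of the size of the other, which is the saving that is traded against detail.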


THE NUMBERS GAME

- Everyone has joined a numbers race for the highest resolution and the lowest bandwidth. But as it becomes clearer what the numbers really mean, system design gets harder and harder.

Camera factors that affect image resolution:

Transmission factors that affect image resolution:

Display factors that affect image resolution:

The best result comes from combining the best sensor, lens, transmission system, and displays with viewing computers suited to continuous operation and a display resolution matched to the resolution of the system. All of this has to be achieved without making the system uneconomical.

- Many manufacturers now produce 4K cameras. Look at the lenses on those cameras, however, and you have to search quite hard to find a manufacturer that fits a lens matched to the camera. 4K cameras are generally supplied with lenses rated at 6MP or lower.

- Sony is the only manufacturer that offers 4K cameras with lenses rated above 4K resolution.

What Is the Effect?

- A lens produces its best image at the center. If you fit a good 3MP lens to a 10MP camera, the center may be clean and sharp, but sharpness falls off toward the edges.

- There is an upside, though: lower resolution = lower bandwidth.

PPM: pixels per meter, the number of pixels in 1 m (proposed Turkish term: mbp, Metre Başına Piksel)

In open terrain there is no limit to how far a security camera can see. As distance grows, however, the apparent separation between objects shrinks until they can no longer be told apart, and the viewer can no longer distinguish them. What matters is the camera and lens combination. For example, attach any CS-mount camera to the back of a telescope and you can observe even Jupiter. The deciding factor is how well the camera-lens combination renders the object or area the viewer wants to see.

In video security systems, the visibility of that object or area is measured in pixels per meter. The type of camera and the focal length of the lens determine the field of view (FoV), and the distance from the camera to the object determines how many pixels the object occupies. For example, suppose a 2MP camera and lens frame a car so that it fills the whole screen: a car with an average width of 1.8 m then spans 1920 pixels. This gives 1920 / 1.8 ≈ 1,067 ppm, meaning that 1,067 pixels are used to render 1 m of the scene horizontally. This value is called ppm (pixels per meter). The formula is:

ppm = horizontal pixel count ÷ horizontal width of the field of view (m)

The horizontal pixel count in the formula above is the horizontal pixel value of the camera in use (1920 pixels for a Full HD 1920x1080 camera, 3840 pixels for a 4K 3840x2160 camera). The horizontal width of the field of view can be calculated; almost every camera and lens manufacturer provides a calculator for this.
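The formula is simple enough to express directly. The sketch below reproduces the car example from the text; the function name `pixels_per_meter` is an illustrative choice, not something taken from a vendor tool.

```python
def pixels_per_meter(horizontal_pixels: int, fov_width_m: float) -> float:
    """ppm = horizontal pixel count / horizontal width of the field of view."""
    if fov_width_m <= 0:
        raise ValueError("field-of-view width must be positive")
    return horizontal_pixels / fov_width_m


if __name__ == "__main__":
    # A 1.8 m wide car filling the frame of a Full HD (1920-pixel-wide) camera.
    print(round(pixels_per_meter(1920, 1.8)))
    # The same framing with a 4K (3840-pixel-wide) camera.
    print(round(pixels_per_meter(3840, 1.8)))
```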

The camera's sensor is also a factor in field-of-view calculations. For this reason it is worth using the manufacturers' calculators when working out the field of view.

One more illustrative example: a 2-megapixel camera looking through a 4 mm lens covers an area of 13.42 x 7.55 m at a distance of 10 m. The density at exactly 10 m is therefore 1920 / 13.42 ≈ 143 ppm. At 5 m the value is about 286 ppm, and at 3 m about 477 ppm.
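The way density changes with distance can be sketched with the usual pinhole approximation, in which the field-of-view width grows linearly with distance. The sensor width of 5.368 mm used here is an assumption, back-calculated so that a 4 mm lens gives the 13.42 m width quoted above at 10 m; it is not a published specification, and a manufacturer's calculator remains the better source for real designs.

```python
# Assumed sensor width, chosen only to match the 13.42 m @ 10 m example.
SENSOR_WIDTH_MM = 5.368


def fov_width_m(distance_m: float, focal_length_mm: float,
                sensor_width_mm: float = SENSOR_WIDTH_MM) -> float:
    """Horizontal field-of-view width under the pinhole approximation."""
    return sensor_width_mm * distance_m / focal_length_mm


def ppm_at_distance(horizontal_pixels: int, distance_m: float,
                    focal_length_mm: float) -> float:
    """Pixels per meter at a given distance from the camera."""
    return horizontal_pixels / fov_width_m(distance_m, focal_length_mm)


if __name__ == "__main__":
    for distance in (10, 5, 3):
        density = ppm_at_distance(1920, distance, focal_length_mm=4)
        print(f"{distance} m: about {density:.0f} ppm")
```

Because the width scales with distance, halving the distance doubles the ppm value.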

278 ppm | 139 ppm | 69 ppm | 34 ppm

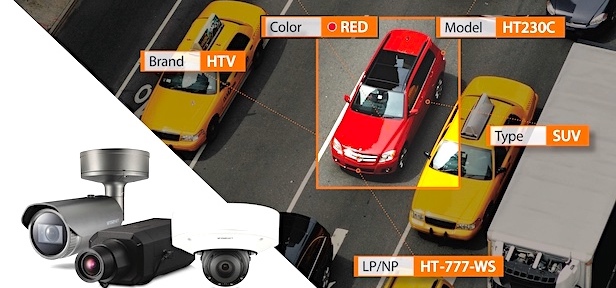

The effect of the ppm value on the image is shown in the pictures below. When distances are measured in feet, the corresponding unit is ppf (pixels per foot). The ppm calculation is theoretical and assumes that the camera actually delivers its stated resolution (for example 1920x1080). In particular, fitting a high-resolution camera with a lens rated for a lower resolution is one of the main reasons a camera fails to produce the expected image. In that case the calculated ppm value is not achieved on the monitor.

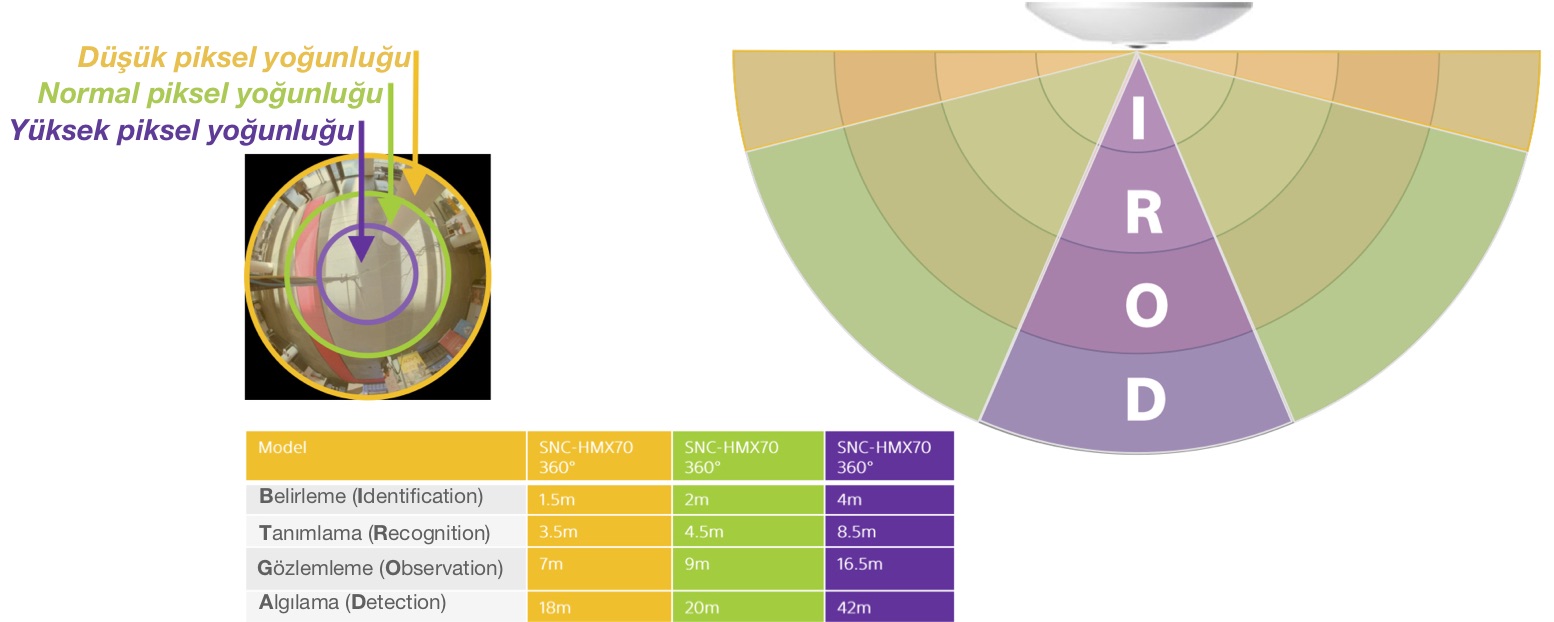

DORI (Detect, Observe, Recognise, Identify)

- DETECT (25 ppm): determining that a person is present and how they are moving

- OBSERVE (63 ppm): observing what a person is doing

- RECOGNISE (125 ppm): describing a person by movement, clothing, and behavior

- IDENTIFY (250 ppm): identifying a person unambiguously

DORI (proposed Turkish acronym: AGTB) is defined by the number of horizontal pixels per meter of the scene (ppm) and is derived from the EN-50132-7 standard.
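As a rough illustration, the four thresholds listed above can be turned into a lookup. The sketch below classifies a ppm value and works out how wide a scene a given camera can cover while still meeting a level; the names `dori_level` and `max_fov_width_m` are hypothetical helpers introduced here.

```python
from typing import Optional

# Thresholds in pixels per meter, as listed above, highest first.
DORI_THRESHOLDS_PPM = [
    ("Identify", 250),
    ("Recognise", 125),
    ("Observe", 63),
    ("Detect", 25),
]


def dori_level(ppm: float) -> Optional[str]:
    """Return the highest DORI level met by `ppm`, or None if below Detect."""
    for name, threshold in DORI_THRESHOLDS_PPM:
        if ppm >= threshold:
            return name
    return None


def max_fov_width_m(horizontal_pixels: int, level: str) -> float:
    """Widest scene (in meters) a camera can cover and still meet `level`."""
    threshold = dict(DORI_THRESHOLDS_PPM)[level]
    return horizontal_pixels / threshold


if __name__ == "__main__":
    print(dori_level(143))                    # density from the 10 m example
    print(max_fov_width_m(1920, "Identify"))  # Full HD camera
```

With these thresholds, the 143 ppm reached at 10 m in the earlier example is enough for Recognise but not for Identify.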


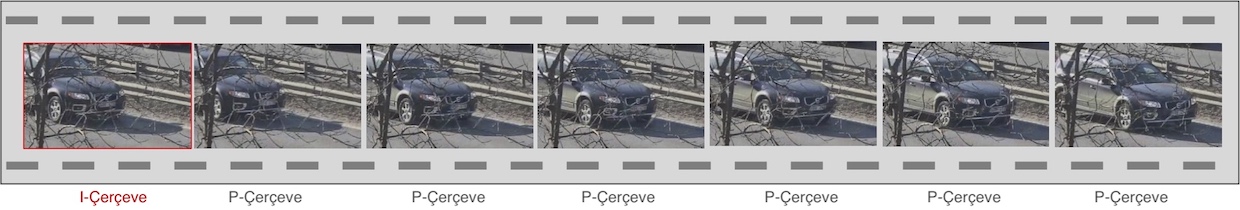

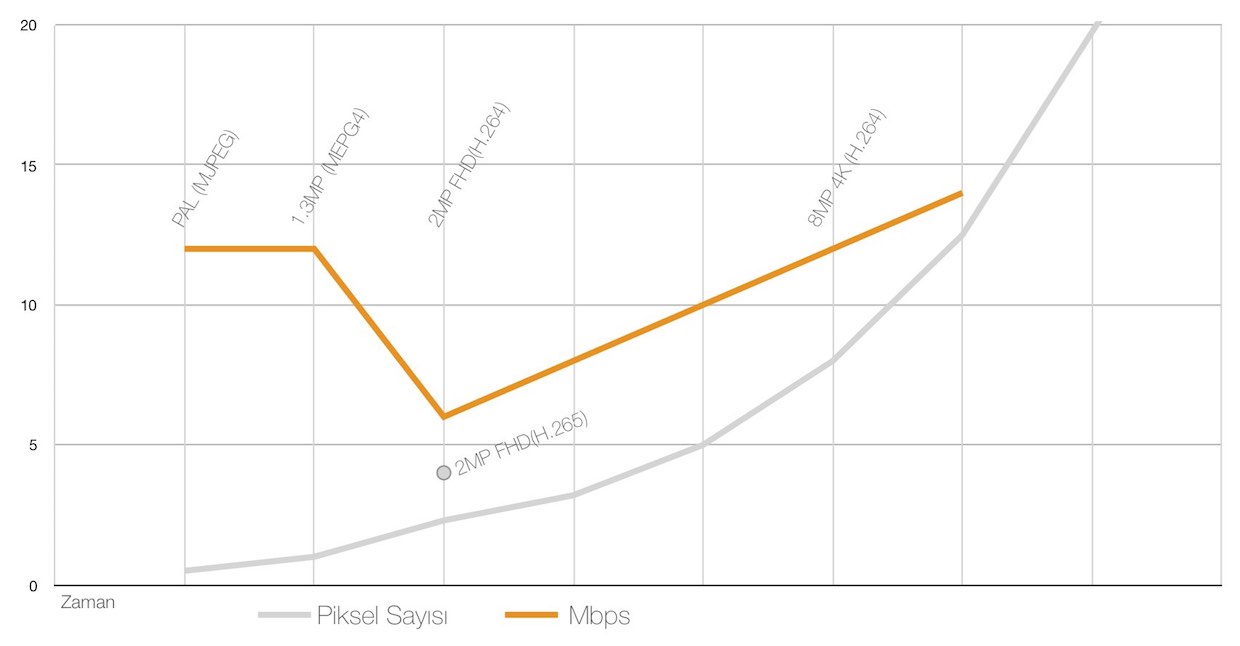

IMAGE COMPRESSION

An uncompressed Full HD (1920 x 1080) video stream runs at about 1.4 Gbps. That bandwidth makes CCTV and remote monitoring systems impractical. With H.264 compression, a bandwidth of between 1 and 8 Mbps* is enough for good image quality at 25 fps. Although H.264 uses cleverly designed encoding techniques, it also discards a great deal of data.

MPEG-4 and H.264 use I-frames and P-frames to cut the amount of data that video requires significantly. Put simply, an I-frame is a complete image compressed much like a JPEG photograph, while a P-frame contains only the differences from the frame it is predicted from. So if nothing in the picture changes, the P-frames produce very little data. In a scene with heavy motion, however, the P-frames can produce a large amount of data too.
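The uncompressed figure can be checked with simple arithmetic. The sketch below assumes 24 bits per pixel, which the article does not specify; under that assumption Full HD works out to roughly 1.24 Gbps at 25 fps and 1.49 Gbps at 30 fps, so the quoted figure of about 1.4 Gbps sits in that range and depends on the frame rate and pixel format used.

```python
def raw_bitrate_bps(width: int, height: int, fps: float,
                    bits_per_pixel: int = 24) -> float:
    """Bit rate of an uncompressed video stream (24 bpp is an assumption)."""
    return width * height * bits_per_pixel * fps


def compression_ratio(raw_bps: float, compressed_bps: float) -> float:
    """How many times smaller the compressed stream is than the raw one."""
    return raw_bps / compressed_bps


if __name__ == "__main__":
    raw = raw_bitrate_bps(1920, 1080, fps=25)
    print(f"raw: {raw / 1e9:.2f} Gbps")
    for mbps in (1, 8):
        ratio = compression_ratio(raw, mbps * 1_000_000)
        print(f"{mbps} Mbps -> about {ratio:.0f}:1")
```

Seen this way, the 1 to 8 Mbps range corresponds to compression of well over 100:1.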


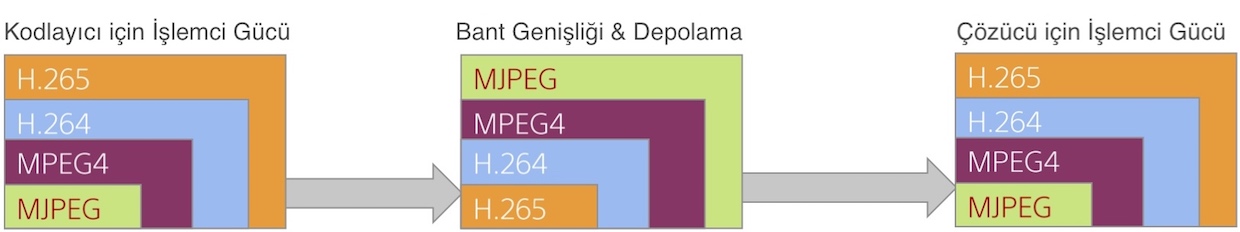

Benefits of MPEG-4, H.264, and H.265 Encoding

With variable-bit-rate encoding, the amount of data a video stream produces depends on the detail and activity in the image. More detail and more motion increase the bandwidth. P-frames from a gray corridor with no movement carry very little data, whereas P-frames from a moving camera in a shopping mall carry a great deal of data while the camera is in motion.

* Factors such as the amount of motion in the image and the use of packet compression affect the amount of data.

From this it follows that high-compression codecs bring large benefits in areas with little or no activity. When viewing a busy shopping mall or a highway interchange, however, where the captured detail matters, less compression may be preferable. Higher-compression codecs also make frame-by-frame playback and reverse playback for forensic analysis harder, because frame-by-frame playback requires I-frames, and the longer the interval between I-frames, the less detail can be recovered from the footage being viewed.

Every compression scheme has its benefits, and over time the greater processing power available in devices (cameras, recorders, encoders, and decoders) allows more advanced encoding algorithms. The cost is that more processing power is needed in the encoder (the camera) and in the decoder (the client PC or decoder units). When H.264 replaced MPEG-4, many companies and organizations were slow to adopt the standard once they learned that client computers would have to be upgraded. The same is true of H.265 today. The process is illustrated below.

Note: More complex encoding requires more processing, and latency therefore increases further.

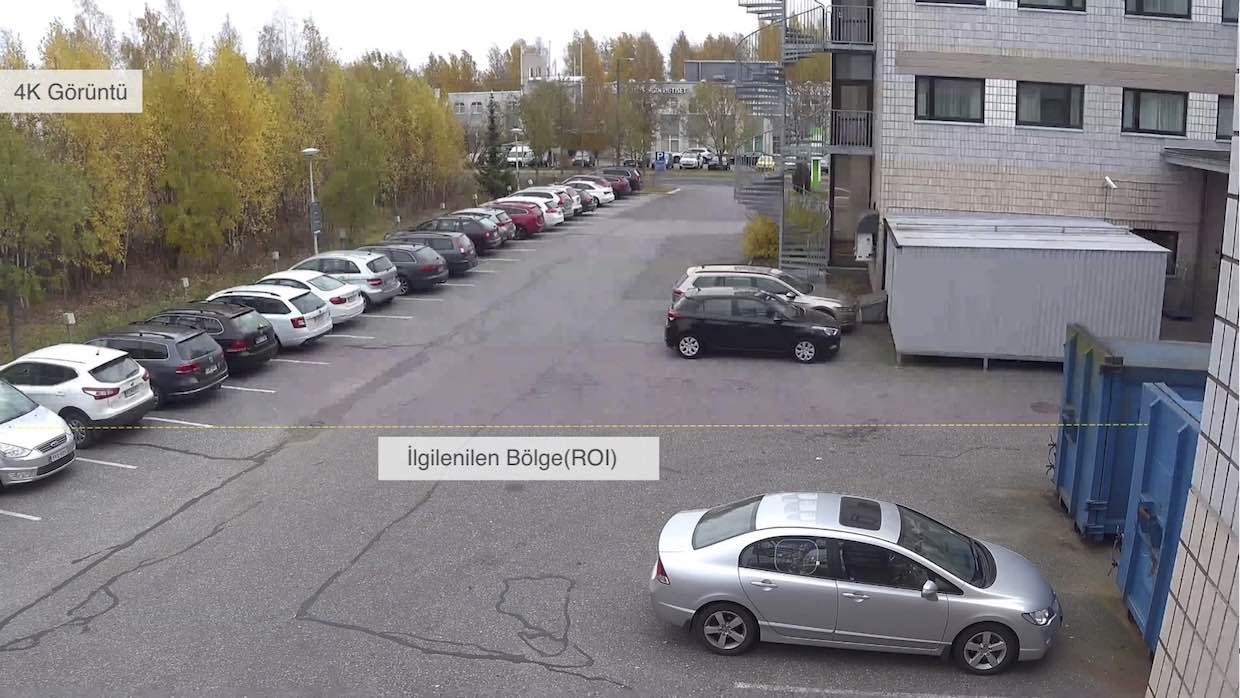
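How motion and I-frame spacing drive the bit rate can be sketched with a very simplified model in which each group of pictures holds one I-frame followed by P-frames. The frame sizes below are made-up illustrative values, not measurements, and the model ignores B-frames and the encoder's rate control.

```python
def average_bitrate_bps(i_frame_bytes: int, p_frame_bytes: int,
                        gop_length: int, fps: float) -> float:
    """Average bit rate for a stream of one I-frame plus (gop_length - 1) P-frames."""
    if gop_length < 1:
        raise ValueError("gop_length must be at least 1")
    bytes_per_gop = i_frame_bytes + (gop_length - 1) * p_frame_bytes
    return bytes_per_gop * 8 * fps / gop_length


if __name__ == "__main__":
    I_FRAME = 100_000  # hypothetical I-frame size in bytes
    QUIET_P = 2_000    # hypothetical P-frame size, static corridor
    BUSY_P = 30_000    # hypothetical P-frame size, busy scene

    for label, p_size in (("quiet", QUIET_P), ("busy", BUSY_P)):
        rate = average_bitrate_bps(I_FRAME, p_size, gop_length=25, fps=25)
        print(f"{label}: {rate / 1e6:.2f} Mbps")

    # Spacing I-frames further apart lowers the rate for the quiet scene.
    rate = average_bitrate_bps(I_FRAME, QUIET_P, gop_length=100, fps=25)
    print(f"quiet, longer I-frame interval: {rate / 1e6:.2f} Mbps")
```

The model shows both effects discussed above: busy scenes inflate the P-frames, and longer I-frame intervals save bandwidth at the price of fewer complete frames to step through.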

SMART ENCODING

Smart H.264 codecs that optimize video compression according to content are now available. They use the following techniques:

- Aggressive noise reduction in low-light scenes.

- Object detection to set compression levels.

- Region of interest (RoI) encoding: selecting specific areas of an image and applying a separate compression level to each region.

These techniques can reduce bandwidth significantly, but they must be used with care so that the user still understands what they are looking at. Smart encoding techniques lower costs by reducing the number of cameras in an installation.

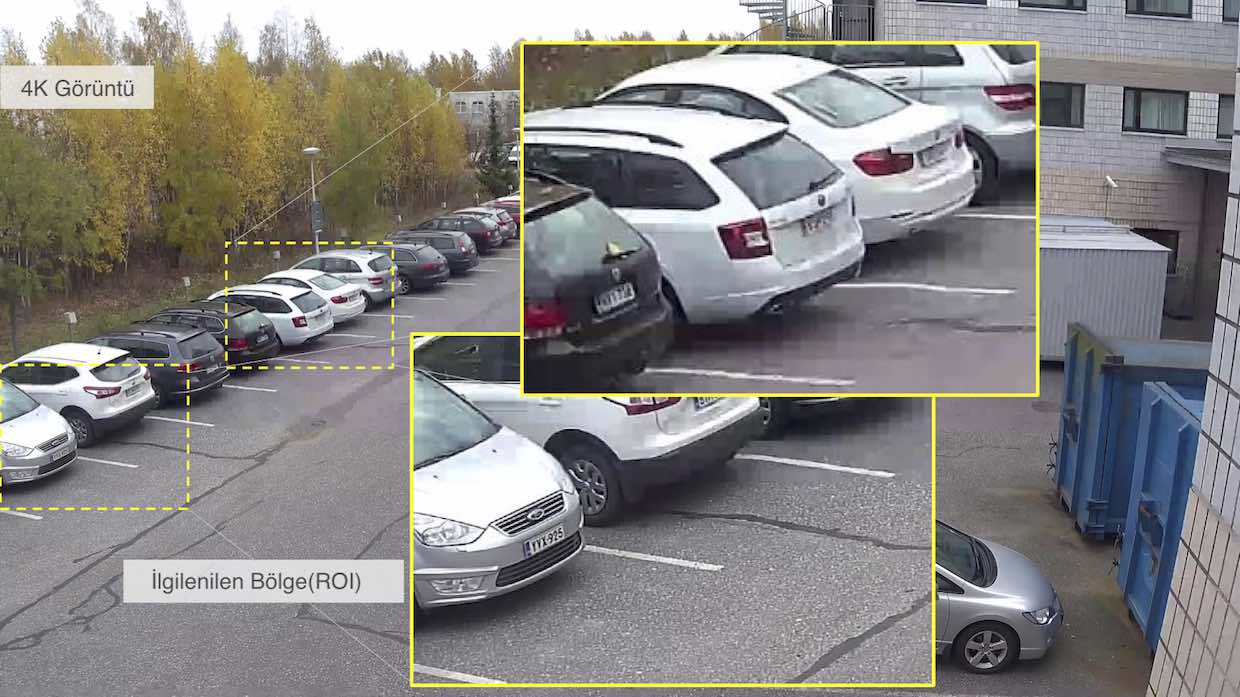

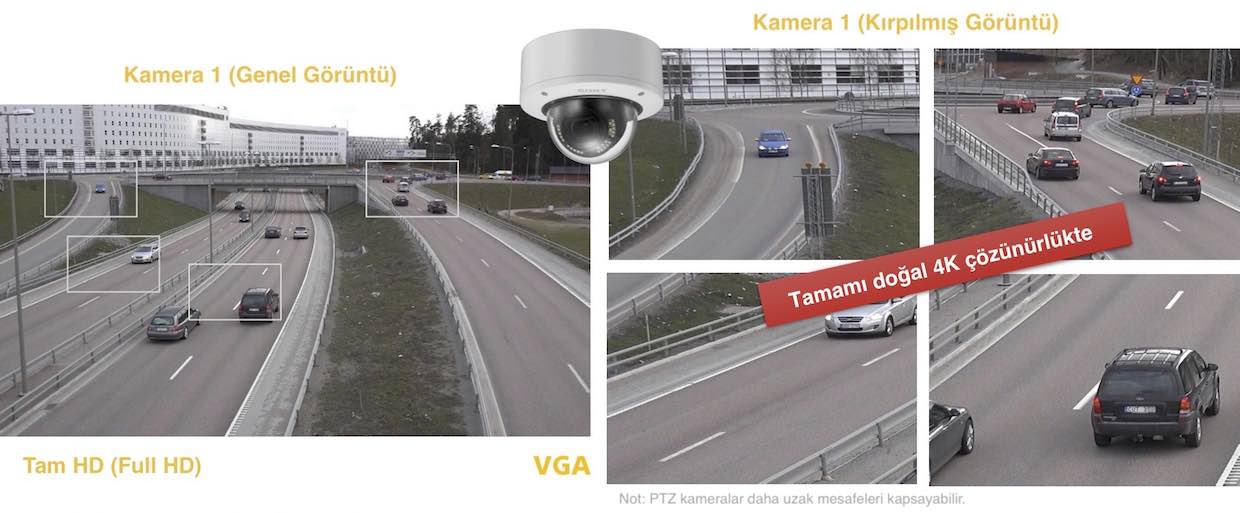

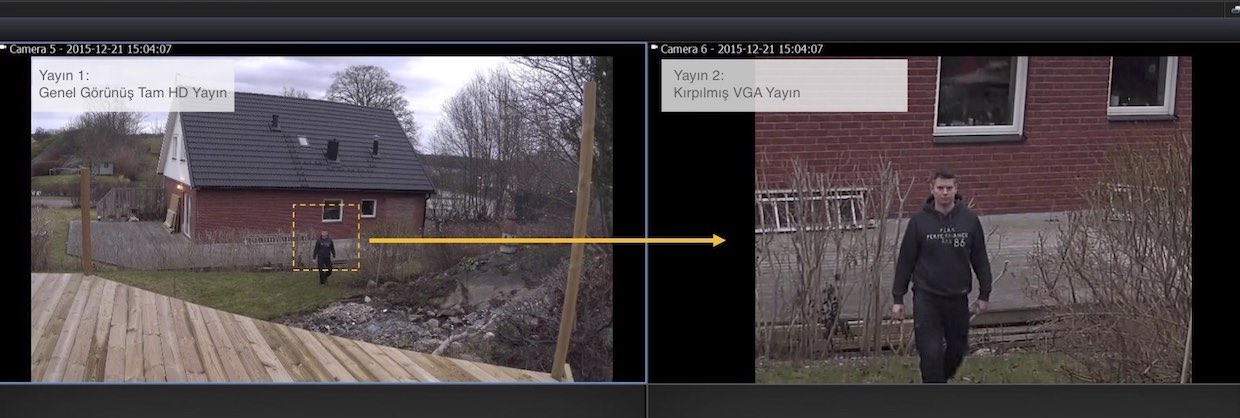

Many applications require high resolution, but only for selected areas of the image. Standard practice is to use more than one camera, for example a fixed overview camera plus a close-up camera or a PTZ.

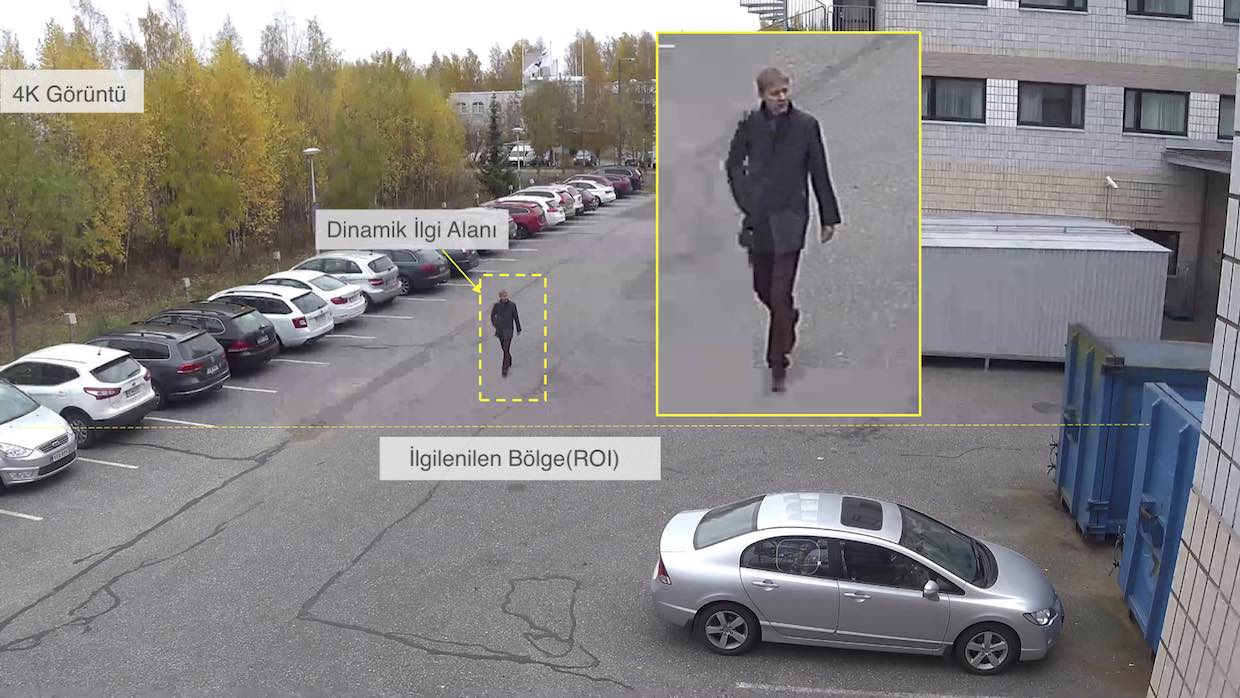

Sony 4K cameras offer an intelligent cropping feature: a single high-resolution camera provides up to 5 streams (one overview plus 4 cropped areas). One camera thus delivers a continuous overview together with high-resolution close-ups.
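Why a cropped stream can carry more detail than the overview can be illustrated with the ppm arithmetic used earlier. This is a hypothetical sketch, not a description of how any particular camera implements cropping: it assumes the overview is scaled down to 1920 pixels wide while the crops are delivered at the sensor's native pixel density, and the 30 m scene width is an arbitrary example value.

```python
def overview_ppm(output_width_px: int, scene_width_m: float) -> float:
    """Density of an overview stream scaled to `output_width_px` across the scene."""
    return output_width_px / scene_width_m


def crop_ppm(sensor_width_px: int, scene_width_m: float) -> float:
    """Density of an unscaled crop: it keeps the sensor's native density."""
    return sensor_width_px / scene_width_m


def crop_scene_width_m(crop_width_px: int, sensor_width_px: int,
                       scene_width_m: float) -> float:
    """Width of the scene covered by a crop of `crop_width_px` sensor pixels."""
    return scene_width_m * crop_width_px / sensor_width_px


if __name__ == "__main__":
    SCENE_WIDTH_M = 30.0  # arbitrary example scene width
    print(overview_ppm(1920, SCENE_WIDTH_M))              # scaled overview
    print(crop_ppm(3840, SCENE_WIDTH_M))                  # native-density crop
    print(crop_scene_width_m(960, 3840, SCENE_WIDTH_M))   # area a 960 px crop covers
```

Under these assumptions the crop has twice the pixel density of the overview while covering only a quarter of the scene width.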

Captured with a single SNC-VM772R.


INTELLIGENT CROPPING WITH MOTION DETECTION

By combining cropping with VMD (video motion detection) technology, Sony provides dynamic cropping. While the overview stream continues, motion can be detected on up to 4 objects at the same time, and those areas can be streamed as intelligent crops. The auto-tracking system on a moving PTZ camera, by contrast, can follow only one target, and the overview is lost while it tracks.

CONCLUSION

Before deciding which resolution to use and how much bandwidth to allocate, it is essential to understand what the camera is for.

- For Detect: low resolution and low bandwidth are acceptable.

- For Observe: medium resolution is suitable, and bandwidth can be relatively low.

- For Recognise: higher resolution and less compression are required.

- For Identify: high resolution and low compression are best.

Note: Remember that resolution expressed in ppm is inversely proportional to the distance from the camera. As the area or object to be viewed gets farther from the camera, its resolution in ppm decreases.

Verifying the actual ppm by testing with a real image is the best way to make sure you can recognise and identify people in a real situation. From such tests you can choose the camera type, the lens, and the compression level correctly. It is also very useful to build a setup that can switch the camera profile at different times, reducing bandwidth when there is no motion and raising it when more detail is needed. In a warehouse, for example, "Identify" may be required on all cameras during working hours, while "Detect" or "Observe" will be enough for most cameras outside working hours.

Understanding the relationship between bandwidth and resolution in video security systems makes it possible to design systems economically and competitively. It reduces the budget that individuals and organizations must set aside for these applications and, in turn, contributes to the protection of national assets.
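The saving from switching profiles by time of day can be estimated with a few lines of arithmetic. The schedule below is hypothetical: it borrows the two ends of the 1 to 8 Mbps range mentioned earlier for H.264 and assumes a 10-hour working day, neither of which is a recommendation.

```python
from typing import List, Tuple


def daily_storage_gb(schedule: List[Tuple[float, float]]) -> float:
    """Storage per camera per day in GB for a list of (hours, bitrate_mbps) entries."""
    total_hours = sum(hours for hours, _ in schedule)
    if abs(total_hours - 24) > 1e-9:
        raise ValueError("schedule must cover exactly 24 hours")
    total_bits = sum(hours * 3600 * mbps * 1_000_000 for hours, mbps in schedule)
    return total_bits / 8 / 1e9  # bits -> bytes -> gigabytes


if __name__ == "__main__":
    constant = daily_storage_gb([(24, 8)])           # one profile all day
    switched = daily_storage_gb([(10, 8), (14, 1)])  # lower profile off-hours
    print(f"constant profile: {constant:.1f} GB/day")
    print(f"switched profile: {switched:.1f} GB/day")
```

With these assumed values, switching profiles roughly halves the daily storage for each camera.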
